New research from the University of Glasgow is unlocking the future of eye-tracking technology for smartphones.

By adjusting the size, shape, and position of interactive elements, researchers have found ways to enhance the accuracy and reliability of gaze-based controls. These insights could help device manufacturers and app developers create a seamless, hands-free mobile experience for users in a wider range of environments.

Placing interactive elements away from the top and bottom edges of the screen can also improve performance, the team found. They also identified an ideal ‘visual angle’, meaning how much of the user’s field of view a target occupies, which balances gaze accuracy against the amount of screen space the target takes up.

Taken together, the recommendations could help eye-tracking become a reliable way to interact with screens in a much wider range of situations.

Apple have recently enabled eye-tracking as an accessibility option on iOS devices with front-facing cameras, but currently it only works in very specific conditions. Users need to put their devices down and keep them still in order for the tracking to remain accurate.

The research is set to be presented as a paper at the CHI Conference in Yokohama, Japan, at the end of April. Dr Mohamed Khamis, who leads the University of Glasgow’s research on gaze-based interaction, supervised the paper’s lead author, Omar Namnakani, a postgraduate researcher at the School of Computing Science.

Dr Khamis said: “Apple’s eye-tracking function has helped disabled people use touch-free interactions with their devices more comfortably and effectively, but for the moment it only works in very limited circumstances.

“For several years now, we’ve been looking at ways in which eye-tracking could become an ‘always on’ feature that can be used in any environment, from sitting on bumpy train cars to night-time walks in the rain. It could even help in clinical settings, where doctors might need to keep their gloved hands sterile while interacting with mobile devices in an operating theatre.”

The paper builds on previous research from the Glasgow group, which has also provided insights into the most effective uses of different methods of gaze interaction.

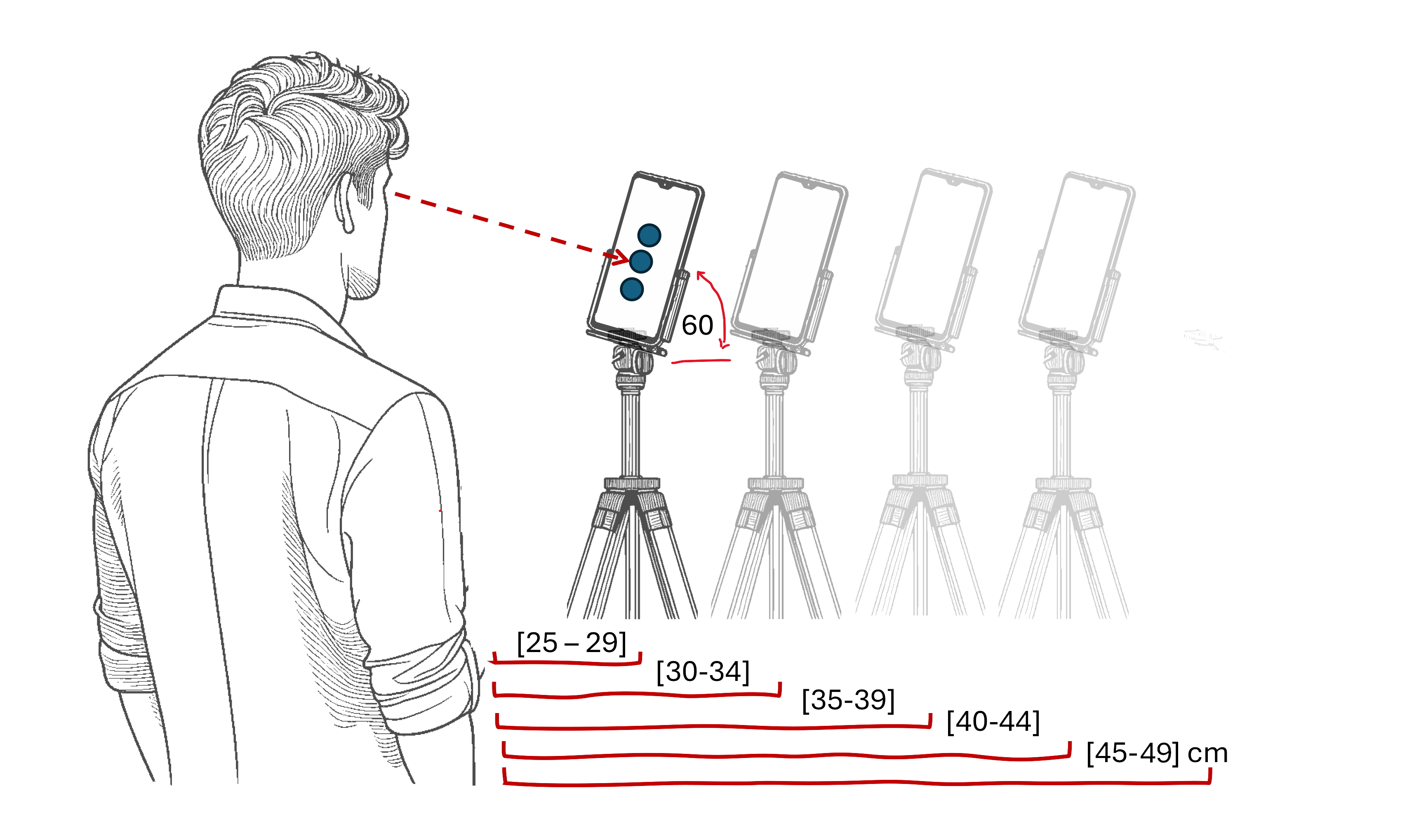

Their recommendations are based on lab tests which enlisted 24 volunteers aged between 22 and 44. The study participants had their gaze tracked by an iPhone X across a series of situations which varied the size, shape and position of onscreen icons, and users’ distance from the screen.

They found that the ‘sweet spot’ for eye-tracking accuracy came when onscreen targets occupied four degrees of the volunteers’ visual field. At typical distances between the device and users’ eyes, targets of this size reached up to 70% accuracy without taking up too much of the screen.

Accuracy also fell as the phone was brought closer to users’ eyes, from the maximum tested distance of 49cm to the closest of 25cm.
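To make the ‘visual angle’ figure more concrete, the short sketch below converts an angle into a physical target size using the standard viewing-geometry relation size = 2 × distance × tan(angle / 2). It is an illustration only, not code from the paper: the function name `target_size_mm` is hypothetical, and the sketch simply applies the four-degree figure to the 25cm and 49cm distances used in the study.

```python
import math


def target_size_mm(visual_angle_deg: float, distance_mm: float) -> float:
    """Physical size (mm) of a target that subtends `visual_angle_deg` at `distance_mm`.

    Standard viewing geometry: size = 2 * d * tan(theta / 2).
    """
    half_angle = math.radians(visual_angle_deg) / 2
    return 2 * distance_mm * math.tan(half_angle)


# The 4-degree "sweet spot" and the 25-49 cm range come from the study;
# the conversion itself is ordinary trigonometry, not the authors' code.
for distance_cm in (25, 49):
    size = target_size_mm(4.0, distance_cm * 10)
    print(f"{distance_cm} cm -> {size:.1f} mm")
# 25 cm -> 17.5 mm
# 49 cm -> 34.2 mm
```

Because size grows linearly with distance at a fixed angle, a four-degree target is roughly twice as large at 49cm as at 25cm. This is the kind of relationship a distance-adaptive interface, like the one Dr Khamis describes below, would need to account for.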

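They also noted that the phone’s cameras were much better at tracking horizontal (side-to-side) eye movements than vertical (up-and-down) ones. Adding an invisible area around each element, which enlarges the region that accepts a gaze selection without changing what the user sees, could also improve accuracy.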
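The idea of an invisible area around an element can be sketched as a simple hit test against an expanded rectangle. This is a minimal illustration under stated assumptions, not the team’s implementation: the `Target` class, the `gaze_hits` function, the millimetre coordinates and the margin values are all hypothetical. Separate horizontal and vertical margins are included only because the study found vertical eye movements harder to track; the paper’s actual padding scheme is not described here.

```python
from dataclasses import dataclass


@dataclass
class Target:
    """A hypothetical onscreen element; coordinates in mm from the screen's top-left."""
    x: float
    y: float
    width: float
    height: float


def gaze_hits(target: Target, gaze_x: float, gaze_y: float,
              margin_x: float = 0.0, margin_y: float = 0.0) -> bool:
    """True if the gaze point falls inside the target expanded by an invisible margin.

    margin_x / margin_y are hypothetical tuning values: the visible element is
    unchanged, only the region that accepts a gaze selection grows.
    """
    return (target.x - margin_x <= gaze_x <= target.x + target.width + margin_x
            and target.y - margin_y <= gaze_y <= target.y + target.height + margin_y)


button = Target(x=20, y=40, width=17.5, height=17.5)
# A gaze estimate landing 3 mm below the button misses without padding...
print(gaze_hits(button, gaze_x=28, gaze_y=60.5))                # False
# ...but is accepted with a 4 mm invisible vertical margin (hypothetical value).
print(gaze_hits(button, gaze_x=28, gaze_y=60.5, margin_y=4.0))  # True
```

The margins trade precision for tolerance: larger values absorb more tracking error but raise the risk of neighbouring targets overlapping, so on a real layout they would need to stay smaller than the gaps between elements.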

Mr Namnakani said: “Eye-tracking is a very promising technique to allow people to interact with phones without using their hands, but at the moment it’s held back by the challenges of ensuring that people’s gaze can be followed accurately when they are on the move.

“Our new research has helped us provide a comprehensive set of recommendations for software and hardware developers to consider when building eye-tracking systems. We hope that our work will help eye-tracking become a more practical and widely-adopted feature in the years to come.”

Dr Khamis added: “Ultimately, our work aims to make gaze interaction a first-class input modality – something that is as useful as touch for interacting with devices. One of the ways we’re keen to build on this research going forward is to look at the ways in which eye-tracking targets on screens might adapt to distance – so targets might reduce in size as your phone gets closer to your face and expand as it moves farther away. We’re in the process of seeking further funding to expand the scope of our research.”

The team’s paper, titled ‘Stretch Gaze Targets Out: Experimenting with Target Sizes for Gaze-Enabled Interfaces on Mobile Devices’, will be presented at the CHI Conference in Yokohama, Japan, on Tuesday, April 29th.